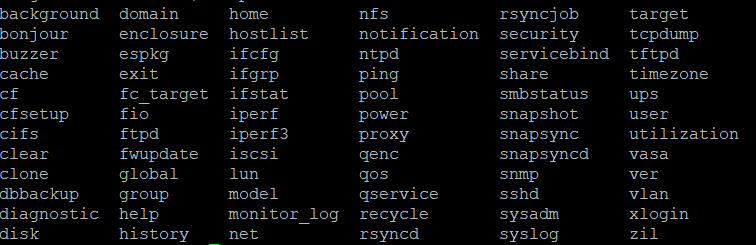

If you’re comfortable accessing your NAS via SSH and the command line, there are a couple of things you might like to check. None of this involves making changes - you will just be using a console interface to query the network stack.

The first is:-

cat /sys/class/net/eth0/speed

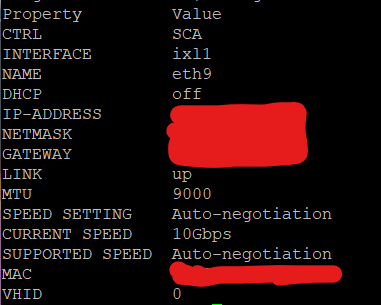

[If you are not using your eth0 network port, substitute the name of the port you are using in the above command.] I have a TVS-672XT, which has a native 10Gb port, so when I run the above I see “10000” in response [the value is reported in Mb/s]. That will give you confidence that the network adapter in your NAS, at least, really is running at 10Gb and hasn’t stepped down because of other [i.e. external] issues.
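If you're not sure which port is in use, here is a rough sketch that prints the reported speed of every interface in one go. The function name `list_link_speeds` is just something I made up for illustration, and I'm assuming the standard Linux sysfs layout under /sys/class/net. Interfaces that are down or virtual may not report a speed at all, so those show as "unknown".

```shell
#!/bin/sh
# Print the reported link speed (in Mb/s) for every interface found under
# a sysfs-style directory. The directory defaults to /sys/class/net.
# list_link_speeds is a hypothetical helper name, not a QNAP tool.
list_link_speeds() {
    net_dir="${1:-/sys/class/net}"
    for iface in "$net_dir"/*; do
        # Skip entries that do not expose a speed file
        [ -e "$iface/speed" ] || continue
        name=$(basename "$iface")
        # Reading the file can fail on links that are down, so fall back
        speed=$(cat "$iface/speed" 2>/dev/null) || speed="unknown"
        echo "$name: ${speed:-unknown}"
    done
}

list_link_speeds
```

This only reads files, so like the commands above it makes no changes to the NAS.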

Another command you can try is:-

ifconfig -a

You’ll get quite a bit of output from this, but it’s reasonably easy to see that it’s broken down into blocks, one for each physical/virtual adapter. If you can find the default adapter [typically eth0], have a look at the 3rd and 4th lines of its block [the RX and TX lines], which is where you’ll get an indication of the number of errors or dropped packets. Here’s what I get on my 672 when I try that command:-

eth0 Link encap:Ethernet HWaddr 24:5E:BE:53:7F:06

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:222358095 errors:0 dropped:161 overruns:0 frame:0

TX packets:372509639 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:230092094401 (214.2 GiB) TX bytes:455412818198 (424.1 GiB)

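As you can see, the adapter has dropped 161 received packets [in 31 days, since its last reboot]. Against roughly 222 million packets received, that is about one packet in 1.4 million, which is nothing to worry about. If you find a large number of errors or dropped packets, that might be indicative of a deeper problem.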
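If you'd rather not pick through the ifconfig output by eye, the same counters are also exposed as individual files under /sys/class/net/<port>/statistics on Linux. Here's a minimal sketch that prints just the error and drop counters; `show_drop_counters` is a name I've invented for illustration, and eth0 is assumed as the default port.

```shell
#!/bin/sh
# Print the error and drop counters for one interface by reading the
# kernel's per-interface statistics files. Read-only, no changes made.
# show_drop_counters is a hypothetical helper name; eth0 is an assumed default.
show_drop_counters() {
    iface="${1:-eth0}"
    stats_dir="${2:-/sys/class/net}/$iface/statistics"
    for counter in rx_errors rx_dropped tx_errors tx_dropped; do
        if [ -r "$stats_dir/$counter" ]; then
            echo "$counter=$(cat "$stats_dir/$counter")"
        else
            # The interface name is wrong or the counter is not exposed
            echo "$counter=unavailable"
        fi
    done
}

show_drop_counters eth0
```

Running it twice a few minutes apart, while copying a large file, shows whether the counters are actually climbing under load or are just left over from some earlier event.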

Next, and I appreciate this might not pinpoint the exact problem, but it might rule out protocol-related issues: if you have access to a Unix or Linux host, you could enable the NFS protocol on the NAS and test performance over NFS rather than CIFS.

Microsoft Windows 11 Pro does have integrated support for NFS, but it needs to be activated before it can be used; search online for instructions. Once you have a working NFS client on your workstation and have enabled NFS on the NAS via Control Panel, you should be able to perform side-by-side testing using the two different network protocols. If you see a delta here, that rather suggests a protocol-level problem, whereas if you get the same or very similar results, that rather suggests that your problem is in the transport or physical layers of your connection…
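For the side-by-side test from a Linux host, something along these lines would do as a crude comparison. It's only a sketch: `time_write` is a name I've made up, the mount points in the usage note are hypothetical, and I'm assuming GNU dd [for `bs=1M` and `conv=fsync`]. It writes a file of zeros to the given directory, forces it to be flushed, and reports a rough MB/s figure based on whole seconds.

```shell
#!/bin/sh
# Time a sequential write into a directory and print an approximate
# throughput figure. time_write is a hypothetical helper name.
# Assumes GNU dd; the test file is deleted afterwards.
time_write() {
    target_dir="$1"
    size_mb="${2:-1024}"
    test_file="$target_dir/throughput_test.bin"
    start=$(date +%s)
    # conv=fsync makes dd flush to the target before exiting, so the
    # timing is not just measuring the local page cache
    dd if=/dev/zero of="$test_file" bs=1M count="$size_mb" conv=fsync 2>/dev/null || return 1
    end=$(date +%s)
    rm -f "$test_file"
    elapsed=$((end - start))
    # Avoid dividing by zero on very quick runs
    [ "$elapsed" -gt 0 ] || elapsed=1
    echo "$target_dir: $((size_mb / elapsed)) MB/s"
}
```

With the same share mounted both ways, you'd run e.g. `time_write /mnt/nas_nfs 4096` and `time_write /mnt/nas_cifs 4096` [both paths are placeholders for wherever you mounted the share] and compare the two figures. Use a file large enough to take at least tens of seconds, otherwise the whole-second timing is too coarse to mean much.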

Finally, your initial issue description suggests that performance started OK but then deteriorated. If the change was abrupt, that might have been a consequence of a change made somewhere. “Change” is a loose term here: it could mean “someone moved a cable and left a marginal connection”… or it could mean an actual technical configuration change. If the change was a slower degradation, that might suggest a slow drain on resources, a memory leak, or something along those lines. You might be able to eliminate that possibility by rebooting all the devices [NAS, hosts and network gear] in the circuit. Again, this might not find the problem, but it might help narrow your search area…